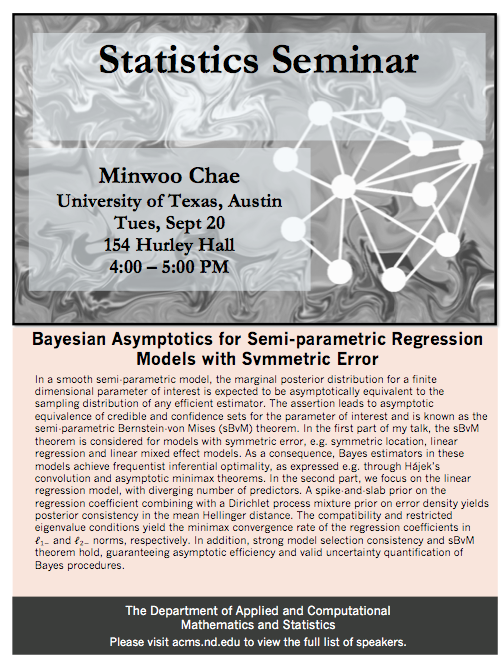

Minwoo Chae

University of Texas, Austin

Department of Mathematics

4:00 PM

154 Hurley

Bayesian Asymptotics for Semi-parametric Regression Models with Symmetric Error

In a smooth semi-parametric model, the marginal posterior distribution for a finite-dimensional parameter of interest is expected to be asymptotically equivalent to the sampling distribution of any efficient estimator. This assertion leads to asymptotic equivalence of credible and confidence sets for the parameter of interest and is known as the semi-parametric Bernstein-von Mises (sBvM) theorem. In the first part of my talk, the sBvM theorem is considered for models with symmetric error, e.g. symmetric location, linear regression, and linear mixed effect models. As a consequence, Bayes estimators in these models achieve frequentist inferential optimality, as expressed e.g. through Hájek’s convolution and asymptotic minimax theorems. In the second part, we focus on the linear regression model with a diverging number of predictors. A spike-and-slab prior on the regression coefficients combined with a Dirichlet process mixture prior on the error density yields posterior consistency in the mean Hellinger distance. Under the compatibility and restricted eigenvalue conditions, the posterior attains the minimax convergence rate for the regression coefficients in the ℓ1- and ℓ2-norms, respectively. In addition, strong model selection consistency and the sBvM theorem hold, guaranteeing asymptotic efficiency and valid uncertainty quantification of Bayes procedures.
