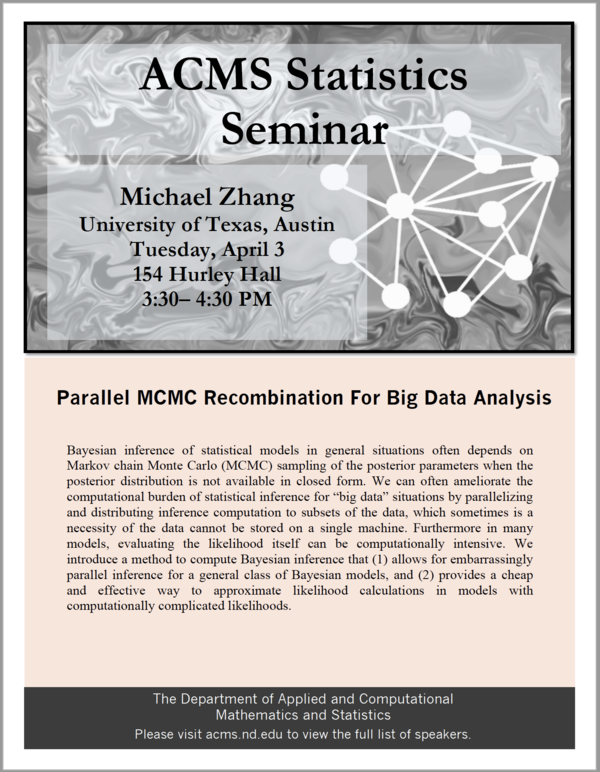

Michael Zhang

University of Texas, Austin

3:30 PM

154 Hurley Hall

In general, Bayesian inference for statistical models relies on Markov chain Monte Carlo (MCMC) sampling from the posterior distribution of the parameters when that distribution is not available in closed form. In “big data” settings, we can often ease the computational burden of inference by parallelizing the computation and distributing it across subsets of the data. This is sometimes a necessity, for instance when the data cannot be stored on a single machine. Furthermore, in many models, evaluating the likelihood itself can be computationally intensive. We introduce a method for Bayesian inference that (1) allows embarrassingly parallel inference for a general class of Bayesian models, and (2) provides a cheap and effective way to approximate the likelihood in models whose likelihoods are computationally expensive to evaluate.

Full List of Statistics Seminar Speakers